March 7, 2019

Stop trying to hit me

At the end of this month, in keeping with the horrifying march of time, The Matrix turns 20 years old. It's hard to overstate how mind-blowing it was for me, a high-schooler at the time, when the Wachowski sisters' now-classic film marched into theaters: combining entirely new effects techniques with Hong Kong wire-work martial arts, it's still a stylish and mesmerizing tour de force.

The sequels... are not. Indeed, little of the Wachowskis' post-Matrix output has been great, although there's certainly a die-hard contingent that argues for Speed Racer and Sense8. But in rewatching them this month, I've been struck by the ways that Reloaded and Revolutions almost feel like the work of entirely different filmmakers, ones who have thrown away one of their most powerful storytelling tools. By that, I mean the fight scenes.

The Matrix has a few set-piece fight scenes, and they're not all golden. The lobby gunfight, for example, doesn't hold up nearly as well on rewatch. But at their best, the movie's action segments deftly thread a needle between "cool to watch" and "actively communicating plot." Take, for example, the opening chase between Trinity, some hapless cops, and a pair of agents:

In a few minutes, we learn that A) Trinity is unbelievably dangerous, and B) however competent she is, she's utterly terrified by the agents. We also start to see hints of their character: one side engaged in agile, skilled hit-and-run tactics, while the authorities bully through on raw power. And we get the sense that while there are powers at work here, it's not the domain of magic spells. Instead, Trinity's escape bends the laws of time and space — in a real way, to be able to manipulate the Matrix is to be able to control the camera itself.

But speaking of rules that can be bent or broken, we soon get to the famous dojo training sequence:

I love the over-the-top kung fu poses that start each exchange, since they're such a neat little way of expressing Neo's distinct emotional progress through the scene: nervousness, overconfidence, determination, fear, self-doubt, and finally awareness. Fishburne absolutely sells his lines ("You think that's air you're breathing now...?"), but the dialog itself is almost superfluous.

The trash-as-tumbleweed is a nice touch to start the last big brawl of the movie, as is the Terminator-esque destruction of Smith's sunglasses. But pay close attention to the specific choreography here: Smith's movements are, again, all power and no technique. During the fight, he hardly even blocks, and there aren't any fancy flips or kicks. But halfway through, after the first big knock-down, Neo starts to use the agent's own attack routines against him, while adding his own improvisations and style at the end of each sequence. One of these characters is dynamic and flexible, and one of them is... well, a machine. We're starting to see the way that the ending will unfold, right here.

What do all these fight scenes have in common? Why are they so good? Well, in part, they're about creating a readable narrative for each character in the shot, driving their action based on the emotional needs of a few distinct participants. Yuen Woo Ping is a master at this — it's practically the defining feature of Crouching Tiger, Hidden Dragon, on which he did fight direction a year later and in which nearly every scene combines character and action almost seamlessly. Tom Breihan compares it to the role that song-and-dance numbers play in a musical in his History of Violence series, and he's absolutely right. Even without subtitles or knowledge of Mandarin, this scene is beautifully eloquent:

By contrast, three years later, The Matrix Reloaded made its centerpiece the so-called "burly brawl," in which a hundred Agent Smiths swarm Neo in an empty lot:

The tech wasn't there for the fight the Wachowskis wanted to show — digital Keanu is plasticky and weirdly out-of-proportion, while Hugo Weaving's doppelgangers only get a couple of expressions — but even if they had modern, Marvel-era rendering, this still wouldn't be a satisfying scene. With so many ambiguous opponents, we're unable to learn anything about Neo or Smith here. There's no mental growth or relationship between two people — just more disposable mooks to get punched. "More" is not a character beat. But for this movie and for Revolutions, the Wachowskis seemed to be convinced that it was.

At the end of the day, none of that makes the first movie any less impressive. It's just a shame that for all the work that went into imitating bullet time or tinting things green, almost nobody ripped off the low-tech narrative choices that The Matrix made. Yuen Woo Ping went back to Hong Kong, and Hollywood pivoted to The Fast and the Furious a few years later.

But not to end on a completely down note, there is one person who I think actually got it, and that's Keanu Reeves himself. The John Wick movies certainly have glimmers of it, even if the fashion has swung from wuxia to MMA. And Reeves' directorial debut, Man of Tai Chi, is practically an homage to the physicality of the movie that made him an action star. If there is, in fact, a plan to reboot The Matrix as a new franchise, I legitimately think they should put Neo himself in the director's chair. It might be the best way to capture that magic one more time.

February 13, 2019

Sunshine

Sunshine wasn't particularly loved when it was released in 2007, despite a packed cast and direction by Danny Boyle. In the years since, it has somehow stubbornly avoided cult status — before its time, maybe, or just too odd, as it swings wildly between hard sci-fi, psychological drama, survival horror, and eventually straight-up slasher flick by way of Apocalypse Now. But it's intensely watchable and, I would argue, underappreciated, especially in comparison to writer Alex Garland's follow-up attempts on the same themes.

"Our sun is dying," Cillian Murphy mutters at the start of the film, and the tone remains pretty grim from there. The spaceship Icarus II is sent on a desperate trip to restart the sun by tossing a giant cubic nuclear bomb into it — a desperate quest, made all the more desperate by the fact that nobody on the mission seems particularly stable or well-suited to the job. Boyle sketches out each crew member quickly but adeptly, giving each one a well-defined (if sometimes precious) persona, like the neurotic psychologist, the hot-tempered engineer, or the botanist who cares more for her oxygen-producing plants than the people onboard (or, viewers suspect, the mission itself). NASA would never put these people in a small space for more than a day, but they're a marvel of small-scale human conflict almost from the very start.

That approach to character is emblematic of Sunshine's construction, which is really less of a plot and more of a set of simple machines rigged in opposition to each other. An early miscalculation in the position of the ship's sun shield leads to a series of cascading crises, each of which provides both a physical challenge and ratcheting tension among the crew from dwindling resources. Yet there's only one real plot twist in the whole thing: the murderous captain Pinbacker of Icarus I, driven mad by his own journey toward the sun. Everything else is established clearly and methodically, with ample recall and signposting — it's the rare science fiction movie that doesn't cheat. Even Pinbacker's slasher-esque rampage shows up in clues for savvy viewers, who can clock a missing scalpel and scattered bloody handprints on rewatch.

Similar to an obvious inspiration (and personal favorite), Alien, one of the film's greatest special effects is the cast. Boyle gets a lot of mileage out of Cillian Murphy's After Effects-blue eyes, but you can't go wrong with Chris Evans, Michelle Yeoh, Benedict Wong, and Rose Byrne. Still, for my money, Cliff Curtis is the film's MVP: as the doctor/psychologist Searle, he's both bomb-thrower and mediator in equal measures. His obsession with the sun leaves him visibly burned, like a Dorian Gray painting of the crew's mental health. And yet, unlike Pinbacker (whom he clearly parallels), Curtis manages to keep his perspective straight and a wry sense of humor — he may love the light, but he's not blinded by it.

So why isn't Sunshine canonized, especially in a climate-change world where "our sun is dying" passes for optimism? Why is it considered a misfire, when Garland's flawed Annihilation was seen as a cult hit in the making? It's still not clear to me. Maybe it just got lost in the shuffle: 2007 was a good year for movies, including There Will Be Blood for the serious film aficionados and The Bourne Ultimatum or Death Proof for fans of surprisingly well-crafted genre fare. Or maybe it's also just too close to its nearest relatives: too easy to write off as "Event Horizon without the schlocky fun" or "Solaris, but for stupid people." Either way, it feels overdue for reconsideration.

December 14, 2018

Lightning Power

This post was originally written as a lightning talk for SRCCON:Power. And then I looked at the schedule, and realized they weren't hosting lightning talks, but I'd already written it and I like it. So here it is.

I want to talk to you today about election results and power.

In the last ten years, I've helped cover the results for three newsrooms at very different scales: CQ (high-profile subscribers), Seattle Times (local), and NPR (shout out to Miles and Aly). I say this not because I'm trying to show off or claim some kind of authority. I'm saying it because it means I'm culpable. I have sinned, and I will sin again, may God have mercy on my soul.

I used to enjoy elections a lot more. These days, I don't really look forward to them as a journalist. This is partly because the novelty has worn off. It's partly because I am now old, and 3am is way past my bedtime. But it is also in no small part because I'm really uncomfortable with the work itself.

Just before the midterms this year, Tom Scocca wrote a piece about the rise of tautocracy — meaning, rule by mulish adherence to the rules. Government for its own sake, not for a higher purpose. When a judge in North Dakota rules that disenfranchising Native American voters is clearly illegal, but will be permitted under regulations forbidding last-minute election changes — even though the purpose of that regulation is literally to prevent voter disenfranchisement — that's tautocracy. Having an easy election is more important than a fair one.

For those of you who have worked in diversity and inclusion, this may feel a little like the "civility" debate. That's not a coincidence.

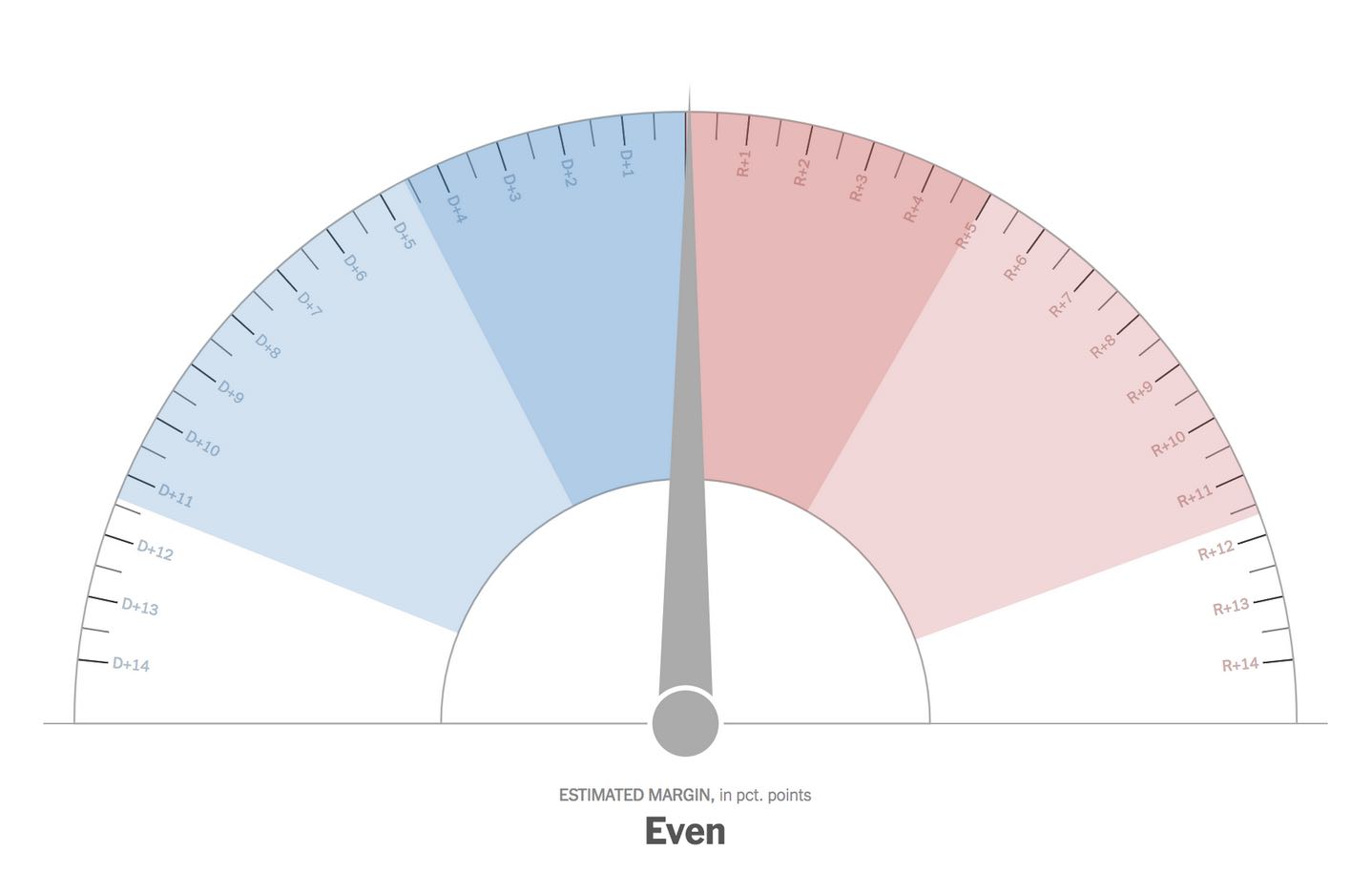

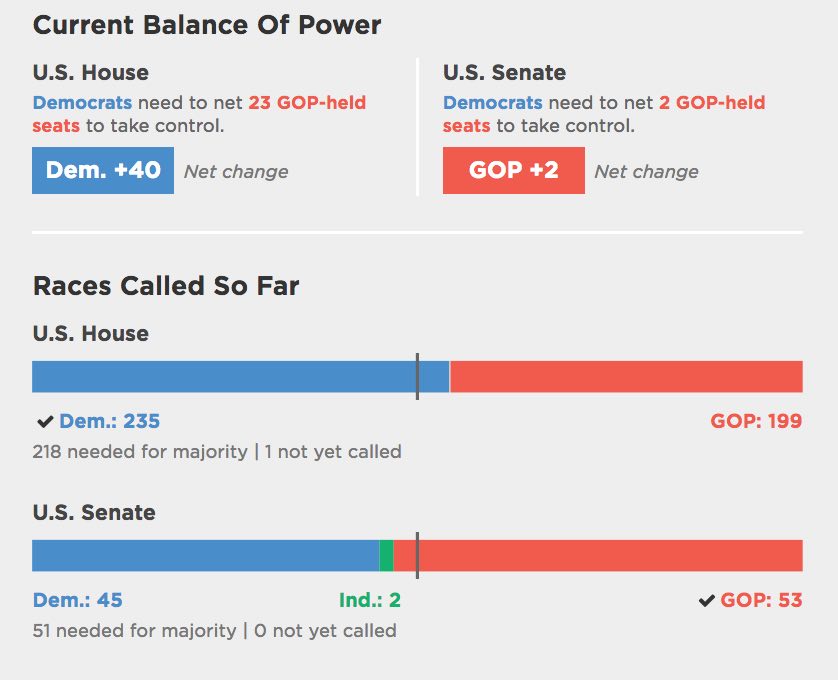

I am concerned that when we cover elections with results pages and breaking alerts, we're more interested in the rules than we are in the intended purpose. It reduces the election to the barest essence — the score, like a football game — divorced from context or meaning. And we spend a tremendous amount of redundant resources across the industry trying to get those scores faster or flashier. We've actually optimized for tautocracy, because that's what we can measure, and you always optimize for your metrics.

But as the old saying goes, elections have consequences. Post-2016, even the most privileged and most jaded of us have to look around at a rising tide of white nationalism and ask, did we do anything to stop this? Worse, did we help? That's an uncomfortable question, particularly for those of us who have long believed (incorrectly, in my opinion) that "we just report the news."

Take another topic, one that you will be able to sell more easily to your mostly white, mostly male senior editors when you get back: Every story you run these days is a climate change story. Immigration, finance, business, politics both foreign and domestic, health, weather: climate isn't just going to kill us all, it also affects nearly everything we report on. It's not just for the science stories in the B section anymore. Every beat is now the climate beat.

Where was climate in our election dashboard? Did anyone do a "balance of climate?"

How will electoral power be used? And against who?

Isn't that an election result?

What would it look like if we took the tremendous amount of duplicated effort spent on individual results pages, distributed across data teams and lonely coders around the country, and spent it on those kinds of questions instead?

The nice thing about a lightning talk is that I don't have time to give you any answers. Which is good, because I'm not smart enough to have any. All I know is that the way we're doing it isn't good enough. Let's do better.

Thank you.

[SPARSE, SKEPTICAL APPLAUSE]

November 10, 2018

Loot Pack

If you wanted to look at the general direction of AAA game development, you could do worse than God of War and Horizon: Zero Dawn (coincidentally, of course, the last two titles I finished on my usually-neglected PS4). They're both big-budget tentpole releases, with all the usual caveats that come with them: graphically rich and ridiculously detailed, including high-priced voice/acting talent, but not particularly innovative in terms of gameplay. But even within this space, it's interesting to see the ways they diverge — and the maybe-depressing tricks they share.

Of the two, Horizon (or, as Belle dubbed it for some reason, Halo: Dark Thirty) is the better game. In many ways, it's built on a simplified version of the A-life principles that powered Stalker and its sequels: creating interesting encounters by placing varied opponents in open, complex environments. The landscape is gorgeous and immense, with procedurally-generated vegetation and wildlife across a wide variety of terrain with a full day-night cycle. It's pretty and dynamic enough that you don't tend to notice how none of the robotic creatures you're fighting really pay attention to each other apart from warning about your presence — you can brainwash the odd critter into fighting on your side with a special skill, but otherwise almost everything on the map is gunning for you and you alone.

In fact, Horizon's reliance on procedural generation and systems is both its strong suit and its weak point. It's hard to imagine hand-crafting a game this big (Breath of the Wild notwithstanding), but there's a huge gulf between filling a landscape according to a set of gameplay rules and depicting realistic human behavior. One-on-one conversations and the camera work around them are shockingly clumsy compared to the actual (pretty good!) voice work or the canned animation sections. Most of the time, during these scenes, I was just pressing a button to get back to mutilating robot dinosaurs. But you can see where the money went in Horizon: lots for well-rendered undergrowth, not so much for staying out of the uncanny valley.

By contrast, God of War is really interested in its characters, as close-up as possible. The actual game is not good — the combat is cramped and difficult to read, ironically because of its love affair with a single-take camera (which is kind of a weirdly pointless gimmick in a video game — almost every FPS since Half-Life is already a single-take shot). It's small and short by comparison to open-world games, with its levels hand-crafted around a linear story. I was shocked at how quickly it wrapped up.

But there's no awkward "crouching-animation followed by two-shot conversation tree" here: it may be a six-hour storyline, but it's beautifully motion-captured and animated. When Jeremy Davies is on-screen as Baldur, it's recognizably Jeremy Davies — not just in the facial resemblance, but in the way he moves and the little tics he throws in. There are maybe five characters to speak of in the whole thing, but they come through as real performances (Sindri, the dwarven blacksmith with severe neat-freak tendencies, is one of my favorites). In retrospect, I may wish I had just watched a story supercut on YouTube, but there's no doubt that it's an expensive, expressive production.

Where both games share mechanics, unfortunately, is a common trick in AAA design these days: crafting and loot systems. Combat yields random, color-coded rewards similar to an MMO, and those rewards are then used alongside some sort of currency to unlock features, skills, or equipment. It extends the gameplay by putting your progress behind a certain number of hours grinding through the combat loops, as this is cheaper than actually creating new content at the level of richness and detail that HD games demand.

If your combat is boring (God of War), this begins to feel like punishment, especially if it's not particularly well-signposted that some enemies are just beyond your reach early in the game. It bothered me less in Horizon, where I actually enjoyed the core mechanical loop, but even there playability suffered: the most interesting parts of the game involve using a full set of equipment to manipulate encounters (or recover when they go wrong), but most of that toolkit is locked behind the crafting system to start. Instead of giving players more options and asking them to develop a versatile skillset from the start, it's just overwhelmingly lethal to them for the first third of its overall length (a common problem — it's tough to create a good skill gate when so much of the game is randomized).

Ironically, while AAA games have gone toward opaque, grind-heavy loot systems, indies these days have swung more toward roguelikes and Metroidvanias: intentionally lethal designs that marry a high skill ceiling with a very clear unlock progression. It may be a far cry from Nintendo's meticulous four-step teaching structure, but since indie developers aren't occupied with filling endless square miles of hand-crafted landscape, they've sidestepped the loot drop trap. Will the big titles learn from that, or from the "loot-lite" system that underlies Breath of the Wild's breakable weapons? I hope so. But the economics of HD assets seem hard to argue with, barring some kind of deeply disruptive new trend.

October 2, 2018

Generators: the best JS feature you're not using

People love to talk about "beautiful" code. There was a book written about it! And I think it's mostly crap. The vast majority of programming is not beautiful. It's plumbing: moving values from one place to another in response to button presses. I love indoor plumbing, but I don't particularly want to frame it and hang it in my living room.

That's not to say, though, that there isn't pleasant code, or that we can't make the plumbing as nice as possible. A program is, in many ways, a diagram for a machine that is also the machine itself — when we talk about "clean" or "elegant" code, we mean cases where those two things dovetail neatly, as if you sketched an idea and it just happened to do the work as a side effect.

In the last couple of years, JavaScript updates have given us a lot of new tools for writing code that's more expressive. Some of these have seen wide adoption (arrow functions, template strings), and deservedly so. But if you ask me, one of the most elegant and interesting new features is generators, and they've seen little to no adoption. They're the best JavaScript syntax that you're not using.

To see why generators are neat, we need to know about iterators, which were added to the language in pieces over the years. An iterator is an object with a next() method, which you can call to get a new result with either a value or a "done" flag. Initially, this seems like a fairly silly convention — who wants to manually call a loop function over and over, unwrapping its values each time? — but the goal is actually to enable new syntax once the convention is in place. In this case, we get the generic for ... of loop, which hides all the next() and result.done checks behind a familiar-looking construct:

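```javascript
// any iterator works here; an array iterator is the easiest one to make
var iterator = ["a", "b", "c"].values();

for (var item of iterator) {
  // item comes from iterator and
  // loops until the "done" flag is set
}
```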
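To make the protocol concrete, here is a minimal sketch of an iterator written by hand, with no generators involved. The countdown name is just an illustrative example. One detail worth knowing: for ... of actually asks its target for an iterator through a Symbol.iterator method, so a hand-rolled iterator usually returns itself from that method to be usable in a loop.

```javascript
// A hand-written iterator: an object with a next() method that returns
// { value, done } results. countdown is a hypothetical example function.
function countdown(start) {
  let current = start;
  return {
    next() {
      if (current < 0) {
        return { value: undefined, done: true };
      }
      const result = { value: current, done: false };
      current -= 1;
      return result;
    },
    // for ... of calls this to get an iterator; returning `this`
    // lets the iterator itself be looped over directly
    [Symbol.iterator]() {
      return this;
    },
  };
}

// What for ... of does for you, spelled out by hand:
const manual = [];
const it = countdown(3);
let step = it.next();
while (!step.done) {
  manual.push(step.value);
  step = it.next();
}

// The same loop with the new syntax:
const looped = [];
for (const n of countdown(3)) {
  looped.push(n);
}

console.log(manual); // → [3, 2, 1, 0]
console.log(looped); // → [3, 2, 1, 0]
```

Both loops produce the same values; the for ... of version just hides the bookkeeping.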

Designing iteration as a protocol of specific method/property names, similar to the way that Promises are signaled via the then() method, is something that's been used in languages like Python and Lua in the past. In fact, the new loop works very similarly to Python's iteration protocol, especially with the role of generators: while for ... of makes consuming iterators easier, generators make it easier to create them.

You create a generator by adding a * after the function keyword. Within the generator, you can output a value using the yield keyword. This is kind of like a return, but instead of exiting the function, it pauses it and allows it to resume the next time next() is called on it. This is easier to understand with an example than it is in text:

```javascript
function* range(from, to) {
  while (from <= to) {
    yield from;
    from += 1;
  }
}

for (var num of range(3, 6)) {
  // logs 3, 4, 5, 6
  console.log(num);
}
```
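Stepping through a generator by hand makes the pausing visible. This sketch repeats the range() generator so it runs on its own, then calls next() directly instead of using a loop.

```javascript
// Same range() generator as above, repeated so this snippet is self-contained.
function* range(from, to) {
  while (from <= to) {
    yield from;
    from += 1;
  }
}

// Calling range() doesn't run any of its body yet; it just returns an iterator.
const steps = range(1, 2);

// Each next() call runs the body up to the next yield, then pauses there.
const first = steps.next();  // { value: 1, done: false }
const second = steps.next(); // { value: 2, done: false }
// The while loop ends and the function returns, so the iterator is done.
const third = steps.next();  // { value: undefined, done: true }

console.log(first, second, third);
```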

Behind the scenes, calling a generator function doesn't run its body right away. Instead, it returns an iterator. Each call to next() runs the function until it reaches the next yield, so internally it can yield as many values as you want; when the function reaches its end, the iterator is "done" for the purposes of looping. The for ... of syntax takes care of calling next() for you, and each time the function starts up from where it was paused at the last yield.

Previously, in JavaScript, if we created a new collection object (like jQuery or D3 selections), we would probably have to add a method on it for doing iteration, like collection.forEach(). This new syntax means that instead of every collection creating its own looping method (that can't be interrupted and requires a new function scope), there's a standard construct that everyone can use. More importantly, you can use it to loop over abstract things that weren't previously loopable.
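As a sketch of what that looks like, here is a made-up Playlist class (not from any library) that becomes loopable just by defining a [Symbol.iterator] method as a generator. Because for ... of is an ordinary loop, break works, which a forEach() callback can't do.

```javascript
// A hypothetical collection class. Defining *[Symbol.iterator]() as a
// generator method is enough to make instances work with for ... of.
class Playlist {
  constructor(...titles) {
    this.titles = titles;
  }

  *[Symbol.iterator]() {
    for (let i = 0; i < this.titles.length; i++) {
      yield this.titles[i];
    }
  }
}

const playlist = new Playlist("Intro", "Verse", "Chorus", "Outro");

// Unlike a forEach() callback, a for ... of loop can stop early with break.
const played = [];
for (const title of playlist) {
  if (title === "Chorus") break;
  played.push(title);
}
console.log(played); // → ["Intro", "Verse"]

// Other syntax that understands iterables, like spread, works for free.
console.log([...playlist].length); // → 4
```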

For example, let's take a problem that many data journalists deal with regularly: CSV. In order to read a CSV, you probably need to get a file line by line. It's possible to split the file and create a new array of strings, but what if we could just lazily request the lines in a loop?

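```javascript
function* readLines(str) {
  var buffer = "";
  for (var c of str) {
    if (c === "\n") {
      yield buffer;
      buffer = "";
    } else {
      buffer += c;
    }
  }
  // emit the last line, even if the text doesn't end with a newline
  if (buffer.length > 0) {
    yield buffer;
  }
}
```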
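Since the point of reading lines was CSV, here is a rough sketch of the next layer: a readRows generator (a hypothetical helper, not a library function) that splits each line on commas. It is deliberately naive and won't handle quoted fields that contain commas or line breaks, so treat it as an illustration of stacking generators rather than a real parser. It uses a version of readLines that also yields a final line lacking a trailing newline.

```javascript
// Yields one string per line, including a last line with no trailing "\n".
function* readLines(str) {
  let buffer = "";
  for (const c of str) {
    if (c === "\n") {
      yield buffer;
      buffer = "";
    } else {
      buffer += c;
    }
  }
  if (buffer.length > 0) {
    yield buffer;
  }
}

// Hypothetical helper: splits each line on commas. NOT a real CSV parser;
// it ignores quoting rules entirely.
function* readRows(str) {
  for (const line of readLines(str)) {
    if (line === "") continue; // skip blank lines
    yield line.split(",");
  }
}

const csv = "name,count\napples,3\npears,5";
const rows = [...readRows(csv)];
console.log(rows); // → [["name", "count"], ["apples", "3"], ["pears", "5"]]
```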

Reading input this way is easier on memory, since it never builds a separate array of line strings, and looping through lines directly is more expressive than splitting the whole file up front. But what's really neat is that it also becomes composable. Let's say I wanted to read every other line from the first five lines (this is a weird use case, but go with it). I might write the following:

```javascript
function* take(x, list) {
  if (x <= 0) return;
  var i = 0;
  for (var item of list) {
    yield item;
    i++;
    // stop right away, so we never pull an extra item from list
    if (i >= x) return;
  }
}

function* everyOther(list) {
  var keep = true;
  for (var item of list) {
    if (keep) yield item;
    // flip on every item, including the skipped ones
    keep = !keep;
  }
}

// get my weird subset
// (assumes `file` holds the CSV text as a string)
var lines = readLines(file);
var firstFive = take(5, lines);
var alternating = everyOther(firstFive);

for (var value of alternating) {
  // ...
}
```

Not only are these generators chained, they're also lazy: until I hit my loop, they do no work, and they'll only read as much as they need to (in this case, only the first five lines are read). To me, this makes generators a really nice way to write library code, and it's surprising that it's seen so little uptake in the community (especially compared to streams, which they largely supplant).
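One way to see that laziness for yourself is to feed the same kind of pipeline an endless source and count how many values it actually hands out. In this sketch, naturals() and the pulled counter are illustrative additions; take and everyOther are written so that take never requests a sixth item.

```javascript
// Counts how many values have been pulled from the source.
let pulled = 0;

// An endless source: anything that tried to read all of it would hang.
function* naturals() {
  let n = 1;
  while (true) {
    pulled += 1;
    yield n;
    n += 1;
  }
}

// Yields at most `count` items, stopping as soon as the last one is yielded.
function* take(count, iterable) {
  if (count <= 0) return;
  let i = 0;
  for (const item of iterable) {
    yield item;
    i += 1;
    if (i >= count) return;
  }
}

// Keeps the first item, drops the second, keeps the third, and so on.
function* everyOther(iterable) {
  let keep = true;
  for (const item of iterable) {
    if (keep) yield item;
    keep = !keep;
  }
}

const pipeline = everyOther(take(5, naturals()));
const pulledBeforeLoop = pulled; // 0: no generator body has run yet

const result = [...pipeline];
console.log(pulledBeforeLoop, result, pulled); // → 0 [1, 3, 5] 5
```

Even though naturals() never ends, spreading the pipeline finishes, because take returns after five items and the source is never asked for more.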

So much of programming is just looping in different forms: processing delimited data line by line, shading polygons by pixel fragment, updating sets of HTML elements. But by baking fundamental tools for creating easy loops into the language, it's now easier to create pipelines of transformations that build on each other. It may still be plumbing, but you shouldn't have to think about it much — and that's as close to beautiful as most code needs to be.

September 24, 2018

The Best of Times

About two months ago, just before sneaking out the back door so that nobody in the newsroom would try to do one of those mortifying "everyone clap for the departing colleague" routines, I sent a good-bye e-mail to the Seattle Times newsroom. It read, in part:

I'm deeply grateful to Kathy Best, who made the Interactives team possible in 2014. Kathy doesn't, I think, get enough credit for our digital operation. She was always the first to downplay her expertise in that sphere, not entirely without reason. Yet it is hard to imagine The Seattle Times taking a risk like that anymore: hiring two expensive troublemakers with incomprehensible, oddball resumes for a brand-new team and letting them run wild over the web site.

It was a gamble, but one with a real vision, and in this case it paid off. I'm proud of what we managed to accomplish in my four years here on the Interactives team. I'm proud of the people that the team trained and sent out as ambassadors to other newsrooms, so that our name rings out across the country. And I'm proud of the tools we built and the stories we told.

When I first really got serious about data journalism, the team to beat (ironically enough, now that I've moved to the Windy City) was the Chicago Tribune. It wasn't just that they did good work, and formalized a lot of the practices that I drew on at the Times. It was also that they made good people: ex-Trib folks are all over this industry now, not to mention a similar impact from the NPR visuals team that formed around many of the same leaders a few years later. I wanted to do something similar in Seattle.

That's why there was no better compliment, to my ears, than when I would talk to colleagues at other newsrooms or organizations and hear things like "you've built a pretty impressive alumni network" or "the interns you've had are really something." There's no doubt we could always have done better, but in only four years we managed to build a reputation as a place that developed diverse, talented journalists. People who were on or affiliated with the team ended up at the LA Times, San Francisco Chronicle, Philadelphia Inquirer, New York Times, and ProPublica. We punched above our weight.

I never made a secret of what I was trying to do, but I don't think it ever really took hold in the broader organizational culture. That's a shame: turnover was high at the Seattle Times in my last couple of years there, especially after the large batch of buyouts in early 2017. I still believe that a newsroom that sees departures as an essential tool for recruiting and enriching the industry talent pool would see real returns with just a few simple adjustments.

My principles on the team were not revolutionary, but I think they were effective. Here are a few of the lessons I learned:

- Make sacrifices to reward high performers. Chances are your newsroom is understaffed and overworked, which makes it tempting to leave smart people in positions where they're effective but unchallenged. This is a mistake: if you won't give staff room to grow, they'll leave and go somewhere that will. It's worth taking a hit to efficiency in one place in order to keep good employees in the room. If that means cutting back on some of your grunt work — well, maybe your team shouldn't be doing that anyway.

- Share with other newsrooms as much as possible. You don't get people excited about working for your paper by writing a great job description when a position opens up. You do it by making your work constantly available and valuable, so that they want to be a part of it before an opening even exists. And the best way to show them how great it is to work for you is to work with them first: share what you've learned, teach at conferences, open-source your libraries. Make them think "if that team is so helpful to me as an outsider..."

- Spread credit widely and generously. As with the previous point, people want to work in places where they'll not only get to do cool work out in the open, they'll also be recognized for it. Ironically, many journalists from underrepresented backgrounds can be reluctant to self-promote as aggressively as white men, so use your power to raise their profile instead. It's also huge for retention: in budget cut season, newsroom managers often fall back on the old saw that "we're not here for the money." But we would do well to remember that it cuts both ways: if someone isn't working in a newsroom for the money, it needs to be rewarding in other ways, as publicly as possible.

- Make every project a chance to learn something new. This one is a personal rule for me, but it's also an important part of running a team. A lot of our best work at the Times started as a general experiment with a new technology or storytelling technique, and was then polished up for another project. And it means your team is constantly growing, creating the opportunity for individuals to discover new niches they can claim for their own.

- Pair experienced people and newcomers, and treat them both like experts. When any intern or junior developer came onto the Interactives team, their first couple of projects would be done in tandem with me: we'd walk through the project, set up the data together, talk about our approach, and then code it as a team. It meant taking time out of my schedule, but it gave them valuable experience and meant I had a better feel for where their skills were. Ultimately, the team succeeds as a unit, not as individuals.

- Be intentional and serious about inclusive hiring and coverage. It is perfectly legal to prioritize hiring people from underrepresented backgrounds, and it cannot be a secondary consideration for a struggling paper in a diverse urban area. Your audience really does notice who is doing the writing, and what they're allowed to write about. One thing that I saw at the Times, particularly in the phenomenal work of the video team, was that inclusive coverage would open up new story leads time and time again, as readers learned that they could trust certain reporters to cover them fairly and respectfully.

In retrospect, all of these practices seem common-sense to me — but based on the evidence, they're not. Or perhaps they are, but they're not prioritized: a newspaper in 2018 has tremendous inertia, and is under substantial pressure from inside and out. Transparent management can be difficult — to actively celebrate the people who leave and give away your hard work to the community is even harder. But it's the difference between being the kind of team that grinds people down and the kind that polishes them to a shine. I hope we were the latter.

January 30, 2018

Spilled Ink

I took a risk on Splatoon 2. Multiplayer shooters are not, generally, something I enjoy, and I'd never played the first game. Also, it's a weird concept: squid paintball? This is Nintendo's new franchise?

It turns out, yes, Splatoon is pretty great. It hits that sweet spot between the neon pop aesthetics of Jet Set Radio and the swift lethality of Quake 3, like a golden-age Dreamcast game decanted onto modern hardware (thankfully, without Sega's torture controller). And yet I'm surprised that there doesn't seem to be a lot of discussion of the game's central design mechanic, which is odd (but maybe common now that streaming has taken over from blogging).

Splatoon is not technically a first-person shooter, but it plays much like one. A typical FPS is about navigation and control of space, although the precise application of this depends on a number of other design decisions: switching from respawning power-ups to hero abilities, for example, emphasizes strategic position over an optimal path. Regardless, like many video games, play is less about the literal violence onscreen and more about range, line of sight, and predicting (or manipulating) the opponent's movement. You very much see this in the 2016 Doom reboot, where monster speed is actually quite low, and all the mechanics encourage players to rapidly pinball from one to the next in a chain of glory kills.

What Splatoon does is take all this implicit negotiation over space and make it explicit, by letting players alter the "distance" of the map with ink. All weapons in the game inflict damage, but they also paint the floor and walls with your team's color. Areas belonging to the other team damage you and slow down movement, while you can get a speed boost in your own color by swimming through the ink with the left trigger. In the primary game mode, you don't win by damaging the other team, although that helps. Instead, the winning team is the one that covered more of the stage floor when the timer sounds.

The paint mechanic is simple, but a lot of really interesting strategy falls out of it:

- Offense needs to balance broad territory coverage (slow, but stable) against aggressive movement on a narrow path (faster, but easier to cut off).

- This balance shifts throughout the match, especially since narrow paths don't establish a defensive bulwark to slow down the other team.

- Inking the walls is the best way to get height on a map, but doesn't count for points at the end of a match.

- Spraying around a player to slow them down makes them easier to target. Likewise, creating an oblique path and dashing down it is a fast (but risky) way to alter range.

- Swimming through ink refills your ammo faster than staying still. Covering new territory boosts the special meter, but you're vulnerable during most specials. Between the two, you're incentivized to constantly alternate between moving forward and dashing back.

Since players are effectively redefining space within the geometry of the level itself, and because the game's weapons are still aggressively short-ranged, Splatoon levels are less like the sprawling expanse of a map from Team Fortress 2 (I suspect most of them would fit neatly in the gap between that game's historic two forts) and more like Gears of War combat bowls. They're relatively open, with long lines of sight and maybe a few chokepoints to force the teams to interact. The question isn't whether you can find the other players, because you almost certainly can. It's whether you can reach them, and what paths you'll take (or create) to get there.

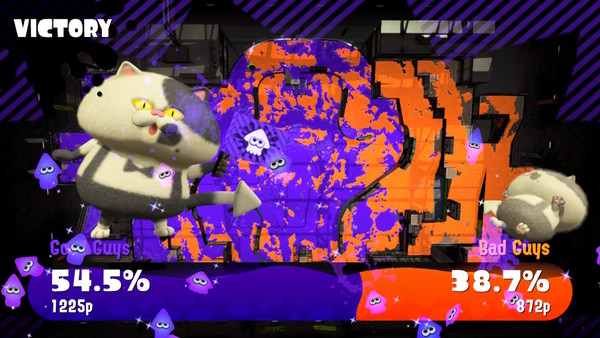

This leads to one of my favorite moments in the game, at the end of the match, when the screen displays a top-down view of the paint-covered map. It pauses there for a moment to build suspense while you eyeball this Pollock-esque splatter and try to figure out which color covers more area, then a pair of tuxedo cats (don't ask) award the game to "good guys" or "bad guys" (your team is always the "good guys"). But beyond the raw score, you can see the history of the game written on that map: this is the place someone got behind our line and painted over one of the less-traveled nooks, that is the stippling of the ink storm we fired off to clear out that sniper position, and over there is the truncated brush stroke where I was chasing someone with the paint roller when the buzzer sounded.

It's been suggested that building a shooter around an oddball mechanic like this is very typical Nintendo: just as they have built a consistently profitable business around novel use of less capable (read: cheaper) graphics hardware, they carve out a niche within genres by refusing to compete directly with the standard design conventions. If you want a Halo-like, you have plenty of choices. There are few other terrain-painting shooters to compare (favorably or otherwise) with Splatoon. The closest I could imagine was Magic Carpet, and that's a wildly different game from more than two decades back.

But while this is to some extent true, none of that matters if you can't execute, and Nintendo is very good at execution. The paint mechanic at the core of Splatoon is interesting at a macro strategy level, but what actually makes it successful is that it feels great. Painting every possible surface for your team is a really satisfying thing to do. The art design is bold and colorful (as it would have to be). The music is great, and syncs up perfectly with the timer in the last minute of each match.

This has been a rough year, and the trend isn't upward. Splatoon has, to some extent, become my pomodoro exercise for anxiety: alternating a three-minute round of neon vandalism with a couple of minutes checking RSS feeds for our impending doom while the lobby fills back up. The in-game experience is relentlessly upbeat and cheerful, even while, apparently, the background fiction is a dark post-apocalyptic story about climate change. Welcome to 2018: even my coping strategies are terrifying.

September 16, 2017

Hacks and Hackers

Last month, I spoke at the first SeattleJS conference, and talked about how we've developed our tools and philosophy for the Seattle Times interactives team. The slides are available here, if you have trouble reading them on-screen.

I'm pretty happy with how this talk turned out. The first two-thirds were largely a given, but the last section went through a lot of revision as I wrote and polished. It originally started as a meditation on training and junior developers, which is an important topic in both journalism and tech. Then it became a long rant about news organizations and native applications. But in the end, it came back to something more positive: how the web is important to journalism, and why I believe that both communities have a lot to gain from mutual education and protection.

In addition to my talk, there are a few others from SeattleJS that I really enjoyed:

- Ashley Williams: JavaScript close to the metal - a typically charming look at abstraction, operating systems, and the web platform

- Steve Kinney: Building musical instruments with Web Audio - A really funny talk on building and playing a modular synthesizer

- Shagufta Gurmukhdas: Web-based VR - Although I'm still not convinced of the value of WebVR, I do love the way this talk shows how custom elements create a beginner-friendly language for new tech

February 14, 2017

Quantity of Care

We posted Quantity of Care, our investigation into operations at Seattle's Swedish-Cherry Hill hospital, on Friday. Unfortunately, I was sick most of the weekend, so I didn't get a chance to mention it before now.

I did the development for this piece, and also helped the reporters filter down the statewide data early on in their analysis. It's a pretty typical piece design-wise, although you'll notice that it re-uses the watercolor effect from the Elwha story I did a while back. I wanted to come up with something fresh for this, but the combination with Talia's artwork was just too fitting to resist (watch for when we go from adding color to removing it).

I did investigate one change to the effect, which was to try generating the desaturated artwork on the client, instead of downloading a heavily-compressed pattern image. Unfortunately, doing image processing in JavaScript is too heavy on the main thread, and I didn't have time to investigate moving it into a worker. I'd also like to try doing it from a WebGL shader in the future, since I suspect that's actually the best way to get it done efficiently.
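For the curious, here is a minimal sketch of what generating desaturated artwork on the client could look like. It is not the code from the story: the `desaturate` helper is hypothetical, and it assumes Rec. 601 luma weights for the gray value. It operates on a flat RGBA array in the same layout as a canvas `ImageData.data`, so the same function could run on the main thread or inside a worker.

```typescript
// Rec. 601 luma weights (an assumption; other weightings such as Rec. 709 also work).
const LUMA_R = 0.299;
const LUMA_G = 0.587;
const LUMA_B = 0.114;

// Hypothetical helper: desaturates RGBA pixel data in place.
// `pixels` is laid out like ImageData.data: [r, g, b, a, r, g, b, a, ...].
// `amount` runs from 0 (original color) to 1 (fully gray).
function desaturate(pixels: Uint8ClampedArray, amount = 1): Uint8ClampedArray {
  for (let i = 0; i < pixels.length; i += 4) {
    const r = pixels[i];
    const g = pixels[i + 1];
    const b = pixels[i + 2];
    const gray = LUMA_R * r + LUMA_G * g + LUMA_B * b;
    // Blend each color channel toward the gray value. Writes to a
    // Uint8ClampedArray are rounded and clamped to 0-255 automatically.
    // The alpha channel (i + 3) is left untouched.
    pixels[i] = r + (gray - r) * amount;
    pixels[i + 1] = g + (gray - g) * amount;
    pixels[i + 2] = b + (gray - b) * amount;
  }
  return pixels;
}
```

In a browser, the pixel array would come from a 2D canvas context's `getImageData()`, and its underlying buffer could be handed to a Web Worker with `postMessage` (listing the buffer as transferable) to keep the loop off the main thread; that plumbing isn't shown here. A WebGL fragment shader would do the same per-pixel math on the GPU.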

In the end, I'm just happy to have worked on something that feels like such an important, powerful investigative story.

January 17, 2017

Catch Up, 2016

2016 was a busy year for interactive projects at The Seattle Times. According to our (very informal) spreadsheet, we did about 72 projects this year, about half of which were standalone. That number surprised me: at the start of the year, it felt like we were off to a slow start, but the final total isn't markedly lower than 2015, and some of those pieces were ambitious.

The big surprise of the year was Under Our Skin, a video project started by four young women in the newsroom and done almost completely under the radar. The videos themselves examine a dozen charged terms, particularly in a Seattle context, and there are a lot of smart little choices that the team made in this, such as the clever commenting prompts and the decision not to identify the respondents inline (so as not to invite pre-judgement). The editing is also fantastic. I pitched in a little on the video code and page development, and I've been working on the standalone versions for classroom use.

Perhaps the most fun projects to work on this year were with reporter Lynda Mapes, who covers environmental issues and tribal affairs for the Times. The Elwha: Roaring back to life report was a follow-up on an earlier, prize-winning look at one of the world's biggest dam removal projects, and I wrote up a brief how-to on its distinctive watercolor effects and animations. Lynda and I also teamed up to do a story on controversial emergency construction for the Wolverine fire, which involved digging through 60GB of governmental geospatial data and then figuring out how to present it to the reader in a clear, accessible fashion. I ended up re-using that approach, pairing it with SVG illustrations, for our ST3 guide.

SVG was a big emphasis for this year, actually. We re-used print assets to create a fleet of Boeing planes for our 100-year retrospective, output a network graph from Gephi to create a map of women in Seattle's art scene, and built a little hex map for a story on DEA funding for marijuana eradication. I also ended up using it to create year-end page banners that "drew" themselves, using Jake Archibald's animation technique. We also released three minimalist libraries for working with SVG: Savage Camera, Savage Image, and Savage Query. They're probably not anything special, but they work around the sharp edges of the elements with a minimal code footprint.
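The general idea behind the self-drawing line technique is to give a path's stroke a single dash as long as the path itself, offset that dash by the same length so nothing is visible, and then animate the offset back to zero. The sketch below is only an illustration of that general approach; it is not code from the banners or from the Savage libraries, and the helper names and the `DrawablePath` interface are hypothetical. The interface is structural, so a real `SVGPathElement` satisfies it, but a plain object can stand in for testing.

```typescript
// Minimal structural type so the helpers can run without a DOM;
// a real SVGPathElement satisfies it.
interface DrawablePath {
  getTotalLength(): number;
  style: {
    strokeDasharray: string;
    strokeDashoffset: string;
    transition: string;
  };
}

// Step 1 (hypothetical helper): make one dash as long as the whole path,
// then offset it by the same length so the stroke starts out hidden.
function primeLineDraw(path: DrawablePath): number {
  const length = path.getTotalLength();
  path.style.transition = "none";
  path.style.strokeDasharray = `${length} ${length}`;
  path.style.strokeDashoffset = `${length}`;
  return length;
}

// Step 2 (hypothetical helper): transition the offset back to zero,
// which reveals the stroke from start to end.
function startLineDraw(path: DrawablePath, durationMs: number): void {
  path.style.transition = `stroke-dashoffset ${durationMs}ms ease-in-out`;
  path.style.strokeDashoffset = "0";
}
```

In a browser, the two steps need a layout in between or the transition won't run: call `primeLineDraw(path)`, force layout (for example with `path.getBoundingClientRect()`), then call `startLineDraw(path, 2000)`.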

Finally, like much of the rest of the newsroom, our team got smaller this year. My colleague Audrey is headed to the New York Times to be a graphics editor. It's a tremendous next step, and we're very proud of her. But it will leave us trying to figure out how to do the same quality of digital work when we're down one newsroom developer. The first person to say that we just need to "do more with less" gets shipped to a non-existent foreign bureau.