February 14, 2017

Quantity of Care

We posted Quantity of Care, our investigation into operations at Seattle's Swedish-Cherry Hill hospital, on Friday. Unfortunately, I was sick most of the weekend, so I didn't get a chance to mention it before now.

I did the development for this piece, and also helped the reporters filter down the statewide data early on in their analysis. It's a pretty typical piece design-wise, although you'll notice that it re-uses the watercolor effect from the Elwha story I did a while back. I wanted to come up with something fresh for this, but the combination with Talia's artwork was just too fitting to resist (watch for when we go from adding color to removing it).

I did investigate one change to the effect, which was to try generating the desaturated artwork on the client, instead of downloading a heavily-compressed pattern image. Unfortunately, doing image processing in JavaScript is too heavy on the main thread, and I didn't have time to investigate moving it into a worker. I'd also like to try doing it from a WebGL shader in the future, since I suspect that's actually the best way to get it done efficiently.
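For a sense of what client-side desaturation involves, here is a minimal sketch of the per-pixel work, not the code from the piece. The function name `desaturate`, the `amount` parameter, and the Rec. 601 luminance weights are all assumptions chosen for illustration. It walks a flat RGBA array, the same layout a canvas `ImageData.data` buffer uses, and blends each pixel toward its gray value.

```javascript
// Desaturate RGBA pixel data in place (illustrative sketch, hypothetical names).
// `pixels` is a flat array of r, g, b, a values (0-255), four entries per pixel.
// `amount` runs from 0 (unchanged) to 1 (fully gray).
function desaturate(pixels, amount) {
  for (var i = 0; i < pixels.length; i += 4) {
    var r = pixels[i];
    var g = pixels[i + 1];
    var b = pixels[i + 2];
    // Weighted luminance (Rec. 601 weights, an assumption for this sketch)
    var gray = 0.299 * r + 0.587 * g + 0.114 * b;
    // Blend each channel toward gray; alpha (i + 3) is left untouched
    pixels[i] = r + (gray - r) * amount;
    pixels[i + 1] = g + (gray - g) * amount;
    pixels[i + 2] = b + (gray - b) * amount;
  }
  return pixels;
}

// One pure red pixel, fully desaturated
var sample = desaturate(new Uint8ClampedArray([255, 0, 0, 255]), 1);
```

In a browser, the array would typically come from a 2D canvas context's `getImageData()`. The loop touches every channel of every pixel, so on a large image it can occupy the main thread for a noticeable stretch.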

In the end, I'm just happy to have worked on something that feels like such an important, powerful investigative story.

January 17, 2017

Catch Up, 2016

2016 was a busy year for interactive projects at The Seattle Times. According to our (very informal) spreadsheet, we did about 72 projects this year, about half of which were standalone. That number surprised me: at the start of the year, it felt like we were off to a slow start, but the final total isn't markedly lower than 2015, and some of those pieces were ambitious.

The big surprise of the year was Under Our Skin, a video project started by four young women in the newsroom and done almost completely under the radar. The videos themselves examine a dozen charged terms, particularly in a Seattle context, and there are a lot of smart little choices that the team made in this, such as the clever commenting prompts and the decision not to identify the respondents inline (so as not to invite pre-judgement). The editing is also fantastic. I pitched in a little on the video code and page development, and I've been working on the standalone versions for classroom use.

Perhaps the most fun projects to work on this year were with reporter Lynda Mapes, who covers environmental issues and tribal affairs for the Times. The Elwha: Roaring back to life report was a follow-up on an earlier, prize-winning look at one of the world's biggest dam removal projects, and I wrote up a brief how-to on its distinctive watercolor effects and animations. Lynda and I also teamed up to do a story on controversial emergency construction for the Wolverine fire, which involved digging through 60GB of governmental geospatial data and then figuring out how to present it to the reader in a clear, accessible fashion. I ended up re-using that approach, pairing it with SVG illustrations, for our ST3 guide.

SVG was a big emphasis for this year, actually. We re-used print assets to create a fleet of Boeing planes for our 100-year retrospective, output a network graph from Gephi to create a map of women in Seattle's art scene, and built a little hex map for a story on DEA funding for marijuana eradication. I also ended up using it to create year-end page banners that "drew" themselves, using Jake Archibald's animation technique. We also released three minimalist libraries for working with SVG: Savage Camera, Savage Image, and Savage Query. They're probably not anything special, but they work around the sharp edges of the elements with a minimal code footprint.

Finally, like much of the rest of the newsroom, our team got smaller this year. My colleague Audrey is headed to the New York Times to be a graphics editor. It's a tremendous next step, and we're very proud of her. But it will leave us trying to figure out how to do the same quality of digital work when we're down one newsroom developer. The first person to say that we just need to "do more with less" gets shipped to a non-existent foreign bureau.

December 13, 2016

Guns and glory

If you'd told me a few years ago that my favorite shooters in 2016 would be reboots of Doom and Wolf3D, I'd probably have been surprised, or depressed, or both. Surprised, because both of them were very much games of their time, and it would seem impossible to recreate their peculiar arcade-oriented chemistry now. Depressed, because I probably would have seen it as a part of the stagnation of the shooter genre, with which I have a love-hate relationship.

But it's true: after I upgraded my graphics card as a birthday gift to myself, I've been going back and replaying a bunch of FPS games (for better or worse, they're the graphical showcases in my Steam library), and the two standouts have been DOOM and Wolfenstein: The New Order. I'm as shocked as anyone! They're both excellent revivals of old id Software franchises, and part of what's so interesting about them is that they're excellent in such completely different ways.

Of the two, The New Order (and its prequel mini-expansion, The Old Blood) has the heavier lift: although it's vaguely connected to the 2001/2009 games, most people are only aware of the original, which was (despite mind-blowing graphics for its time) two-dimensional in both gameplay and character. They weren't great games even back then, and they haven't aged particularly well (TNO includes "nightmare" remakes of the 286 levels, in case you forgot how tedious and confusing Wolf3D could be).

The team behind TNO is the same group that made Chronicles of Riddick and The Darkness at Starbreeze, both of which took ridiculous licensed properties and stretched them past both the source material and the Steam category: Riddick asks players to engineer multiple prison-breaks with not much more than a knife, a black-market night-vision mode, and a lot of Vin Diesel dialog. Half of The Darkness is shooting light bulbs! The shape and flow of a Starbreeze game could be odd — linear chunks connecting free-form adventure hubs — but they were almost always as interesting in the downtime as they were in the action sequences.

By virtue (such as it is) of this being a Wolfenstein game, going too far outside of "open door, shoot Nazis, repeat" was probably too much to ask. But The New Order does pack a surprising amount of pathos into what is otherwise a totally bonkers 1960's alternate history in which the Fourth Reich won the war via mad science. It tilts on the action side of things, but there's still definitely a Starbreeze flavor, if you liked their other titles. Parts of it are brilliantly cinematic, including a ten-year flash-forward sequence that separates the first and second acts.

And while the gameplay doesn't go full retro, it has elements of old-school flavor. There's no regenerating health system or trendy cover-hugging mechanics here, and the use button gets a workout. My favorite refinement is the commander system, where many of the battle setpieces will introduce one or two radio-equipped officers. If you're spotted, they'll call in reinforcements, so a good approach is to stealth-kill them before mopping up the troops. Or you can play the way I do: take out one officer and then charge the other with guns blazing, before they can stack the odds too far. That this is still a viable (and fun) strategy strikes a nice balance between players who want the stealth experience, and those (like me) who are mostly in it for the instant gratification.

DOOM has no such balance, and does not suffer for it in the slightest. There is no clip reloading for any of the weapons, and the run speed is entirely unrealistic. Unlike most shooters since Halo, everything in DOOM is designed to encourage non-stop movement toward (and through) enemies. Which is a big part of the reason why, even though it changes from its predecessor in significant ways (mouselook, a jump key, lots of upgrades and collectibles), it still manages to feel like playing deathmatch in 1996.

The two primary mechanics for maintaining player momentum in the game are a fast mantle, which gives players the ability to very quickly ascend vertically, and the "glory kills," which reward players with a half second of invulnerability (during the animation) and a quick piñata pop of health and ammo. There's no reward for staying still — in fact, like a bullet-hell shooter, players are immediately punished for remaining stationary. The goal is not to block or absorb damage, it's to avoid getting hit at all.

DOOM levels are primarily structured as a series of loosely-connected arenas, which also keeps the deathmatch feel: while hallways and platforms are used to set the mood and create checkpoints, the most intense gameplay is set in wide, 3-D spaces with multiple "circuits," just like the best Quake DM levels. Gears of War is also famous for "combat bowls" as a gameplay tool, but it strongly emphasized cover over movement, whereas DOOM almost never places waist-high walls to serve as partial cover (and they'd be useless in a game with lots of high vantage points anyway).

There's a moment at the very beginning of the game where your character, having ripped out of a set of shackles and punched through the initial set of undead, pulls up a video screen reading "DEMONIC INVASION IN PROGRESS." As mission statements go, this is pretty much DOOM in a nutshell: crank everything up to 11 and embrace the inherent, b-movie absurdity of the thing (a similar process took place in the music direction, which started with no guitars at all and ended up a metal shredfest).

All combined, the end result is about as pure a video game as you can get with a high-budget, AAA studio product in 2016. It's the interactive equivalent of a Fast and Furious movie: mixing comforting aesthetics (as far as the intended audience is concerned, at least) with the maximum amount of intense parallax motion. Nobody's going to mistake it for fine art — it has none of the thoughtful playfulness of, say, Dishonored — but not everything should be fine art. It's a great game.

November 7, 2016

Return values

This is a tale of two algorithms.

Every now and then, someone publishes a piece of crossover writing that blows me away. Last week, Claudia Lo at Rock Paper Shotgun examined the algorithms behind relationship simulation in the indie game Rimworld by decompiling its code:

The question we're asking is, 'what are the stories that RimWorld is already telling?' Yes, making a game is a lot of work, and maybe these numbers were just thrown in without too much thought as to how they'd influence the game. But what kind of system is being designed, that in order to 'just make it work', you wind up with a system where there will never be bisexual men? Or where all women, across the board, are eight times less likely to initiate romance?

I want to take a moment to admire Lo's writing, which takes a difficult technical subject (bias in object-oriented simulation code) and explains it in a way that's both readable and ultimately damning to its subject. It's a calm dissection of how stereotypes can get encoded (literally!) into entertainment, and even goes out of its way to be generous to the developer. I haven't talked much about games journalism in the last few years, but this is the kind of work I wish I'd thought to do when I was still writing.

In short, Lo notes that the code for Rimworld combines sexuality, gender, age, and emotional modeling in a way that's more than a little unsettling: women in the game are all bisexual and may be attracted to much older partners, while men have a narrower (and notably younger) attraction range. The game also models penalties for rejection, but not for being harassed, which leaves players trying to solve bizarre problems like "attractive lesbians that destroy community morale."

Part of what makes Lo's story interesting is how deeply this particular simulation reflects not just general social biases, but the particular biases of its developer. It took a lot more work to encode a complex, weighted system of asymmetrical attraction than it would to just use the same basic algorithm for everyone. Someone planned this very carefully — in fact, since the developer jumped into the comments on the piece with a pseudoscientific rant about the non-existence of bisexual men, we know that he's thought way too much about it. He's really invested in this view of "how people are," and very upset that anyone would think that it's just the slightest bit unrealistic or ignorant.

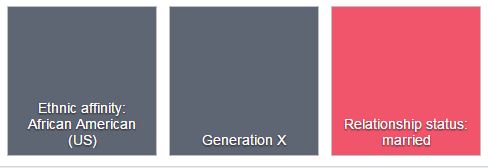

Keep that in mind when reading Pro Publica's investigation into Facebook ad preferences, in which advertisers can target ads toward (or against) particular "ethnic affinities" in violation of federal laws against discrimination in housing and other services. "Affinity" is Facebook's term, a way of hiding behind "we're not actually discriminating on race." Even for the blinkered Silicon Valley, it's astonishing that nobody thought about the ways that this could be misapplied.

The existence of these affinities may be confusing, since Facebook never actually asks about your ethnicity when setting up a profile. Instead, it generates this metadata based on some kind of machine-learning process as you use the service. As is typical for Facebook, this is not only a very bad idea, it's also very badly implemented. I know this myself: Facebook thinks I have an ethnic affinity of "African-American (US)," probably because my friend group via Urban Artistry is largely black, even though I am one of the whitest people in America (there doesn't seem to be an "Ethnic Affinity: White" tag, maybe because that's considered the default setting).

Both Facebook and Rimworld are examples of programming real-world bias into technology, but of the two it's probably Facebook that bothers me more. For the game, a developer very intentionally designed this system, and is clear about his reasons for doing so. But in Facebook's case, this behavior is partially emergent, which gives it deniability. There's no source code that we can decompile, either because it's hidden away on Facebook's servers or because it's the output of a long, machine-generated process and even Facebook can't directly "read" it. The only way to discover these problems is indirectly — such as how some news organizations are trying to collect and analyze Facebook's political ads via user reporting tools.

As more and more of the world becomes data-driven, it's easy to see how emergent behavior could reinforce existing biases if not carefully checked and guarded. When the behavior in question is Pokemon Go redlining, maybe it's not such a big deal, but when it becomes housing — or job opportunities, or access/pricing for services — the effects will be far more serious. Class action lawsuits are a reasonable start, but I think it may be time to consider stronger regulation on what personal data companies can maintain and utilize (granted, I don't have any good answers as to what that regulation would look like). After all, it's pretty clear that tech won't regulate itself.

Addendum: on journalism and public policy

I'm posting this the night before Election Day in the USA. Like a lot of newsrooms, The Seattle Times has a strict non-partisan policy for its journalists, so I can tell you to vote (please do! it's important!), but I can't tell you how I think you should vote or how I voted, and I'm discouraged from taking part in public activities that would signal bias, such as caucusing for either party. It's a condition of my employment at the paper.

Here's what I think is funny: in the post above, I lay out a strong but (I think) reasoned argument for public policy around the use of personal data. While I'm not particularly well-sourced compared to the journalists at Pro Publica, I am one of the few people at the Times who can speak knowledgeably about how databases are stored and interact. I can make this argument to you, and feel like it carries some authority behind it.

But I can only make that argument because our political parties do not (yet) have any coherent preference on how personal data should be treated. If, at some point, the use of personal data were to become politicized by one side or another, I'd no longer be free to share this argument with you. The merits of my position wouldn't have changed, nor would my expertise, but I'd no longer be considered "objective" or "unbiased."

The idea that reporters do not have opinions, or can only have opinions as long as the subjects don't matter, seems self-evidently silly to me. Data privacy is a useful thought experiment: in this case, I'm safe as long as the government never gets its act together. If that seems like a terrible way to run the fourth estate — indeed, if this sounds like a recipe for the proliferation of fact-free publishing outlets encouraged by the malicious inattention of Facebook — I can't disagree. But to tie this to my original point, however this election turns out, I think a lot of journalists are going to need to ask themselves some hard questions about the value of "objectivity" in an algorithm-powered media universe.

October 28, 2016

How I built the ST3 guide

On election day, voters in the Seattle metro area will need to choose whether or not to approve Sound Transit 3, a $54 billion funding measure that would add miles of light rail and rapid bus transit to the city over the next 20 years. It's a big plan, and our metro editor at the paper wanted to give people a better understanding of what they'd be voting on. So I worked with Mike Lindblom, the Times' transportation reporter, and Kelly Shea in our graphics department to create this interactive guide (source code).

The centerpiece of the guide is the system map, which picks out 12 of the projects that will be funded by ST3. As you scroll through the piece, each project is highlighted, and it zooms to fill the viewport. These kinds of scrolling graphics have become more common in journalism, in part because of the limitations of phone screens. When we can't prompt users to click something with hover state and have limited visual real estate, it's useful to take advantage of the most natural verb they'll have at their fingertips: scroll. I'm not wild about this UI trend, but nobody has come up with a better method yet, and it's relatively easy to implement.

The key to making this map work is the use of SVG (scalable vector graphics). SVG is the unloved stepchild of browser images — badly-optimized and only widely supported in the last few years — but it has two important advantages. First, it's an export option in Illustrator, which means that our graphics team (who do most of their work in Illustrator) can generate print and interactive assets at the same time. It's much easier to teach them how to correctly add the metadata I need in a familiar tool than it is to teach the artists an entirely new workflow, especially on a small staff (plus, we can use existing assets in a new way, like this Boeing retrospective that repurposed an old print spread).

Second, unlike raster graphic formats like JPG or PNG, SVG is actually a text-based format that the browser can control the same way that it does the HTML document. Using JavaScript and CSS, it's possible to restyle specific parts of the image, manipulate their position or size, or add/remove shapes dynamically. You can even generate them from scratch, which is why libraries like D3 have been using SVG to do data visualization for years.

Unfortunately, while these are great points in SVG's favor, there's a reason I described it in my Cascadia talk as a "box full of spiders." The APIs for interacting with parts of the SVG hierarchy are old and finicky, and they don't inherit improvements that browsers make to other parts of the page. As a way of getting around those problems, I've written a couple of libraries to make common tasks easier: Savage Query is a jQuery-esque wrapper for finding and restyling elements, and Savage Camera makes it easy to zoom and pan around the image in animated sequences. After the election, I'm planning on releasing a third Savage library, based on our <svg-map> elements, for loading these images into the page asynchronously (see my writeup on Source for details).

The camera is what really makes this map work, and it's made possible by a fun property of SVG: the viewBox attribute, which defines the visible coordinate system of a given image. For example, here's an SVG image that draws a rectangle from [10,10] to [90,90] inside of a 100x100 viewbox:
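As a minimal sketch of that image (the stroke and fill values are assumptions, since only the coordinates are given above), the markup can be written out as a JavaScript string. A rectangle from [10,10] to [90,90] is 80 units wide and 80 units tall.

```javascript
// Markup for a 100x100 viewBox containing one rectangle
// that spans [10,10] to [90,90], so its width and height are both 80.
// The fill and stroke are placeholder styling, not taken from the guide.
var svgMarkup = [
  '<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 100 100">',
  '  <rect x="10" y="10" width="80" height="80" fill="none" stroke="black" />',
  '</svg>'
].join("\n");
```

The four viewBox numbers are the minimum x, minimum y, width, and height of the visible region, in the image's own coordinate units.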

If we want to zoom in or out, we don't need to change the position of every shape in the image. Instead, we can just set the viewbox to contain a different set of coordinates. Here's that same image, but now the visible area goes from [0,0] to [500,500]:
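In code, that change is a single attribute update. The sketch below uses a hypothetical helper named `setViewBox` and a small stand-in object in place of a real element (an assumption, so the example also runs outside a page). In a browser, the same `setAttribute` call would be made on the actual `<svg>` element.

```javascript
// Zoom by rewriting only the viewBox attribute; the shapes are left alone.
function setViewBox(svg, x, y, width, height) {
  svg.setAttribute("viewBox", [x, y, width, height].join(" "));
}

// Stand-in for an <svg> element. In a page this would be something like
// document.querySelector("svg") instead.
var svg = {
  attributes: { viewBox: "0 0 100 100" },
  setAttribute: function (name, value) {
    this.attributes[name] = value;
  }
};

// Widen the visible area to [0,0]-[500,500]
setViewBox(svg, 0, 0, 500, 500);
```

With the wider viewBox, a rectangle spanning [10,10] to [90,90] keeps its coordinates but now occupies only a small corner of the visible area.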

Savage Camera was written to make it easy to manipulate the viewbox in terms of shapes, not just raw coordinates. In the case of the ST3 guide, each project description shares an ID with a group of shapes. When the description scrolls into the window, I tell the camera to focus on that group, and it handles the animation. SVG isn't very well-optimized, so on mobile this zoom is choppier than I'd like. But it's still way easier than trying to write my own rendering engine for canvas, or using a slippy map library like Leaflet (which can only zoom to pre-determined levels).
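The idea of focusing on a shape rather than on raw coordinates can be sketched roughly as follows. This is not Savage Camera's actual API: the function names, the padding parameter, and the plain linear interpolation are all assumptions made for illustration. The bounding box is an object with `x`, `y`, `width`, and `height`, the same shape that the browser's `getBBox()` returns for an SVG group.

```javascript
// Turn a shape's bounding box into a viewBox with some breathing room.
function boxToViewBox(box, padding) {
  return {
    x: box.x - padding,
    y: box.y - padding,
    width: box.width + padding * 2,
    height: box.height + padding * 2
  };
}

// Linearly blend between two viewBoxes; t runs from 0 (from) to 1 (to).
function interpolateViewBox(from, to, t) {
  function mix(a, b) {
    return a + (b - a) * t;
  }
  return {
    x: mix(from.x, to.x),
    y: mix(from.y, to.y),
    width: mix(from.width, to.width),
    height: mix(from.height, to.height)
  };
}

// Serialize for use as the viewBox attribute value.
function toAttribute(view) {
  return [view.x, view.y, view.width, view.height].join(" ");
}

var start = { x: 0, y: 0, width: 500, height: 500 };
var target = boxToViewBox({ x: 10, y: 10, width: 80, height: 80 }, 10);
var halfway = toAttribute(interpolateViewBox(start, target, 0.5));
```

An animated zoom would call the interpolation once per frame with an increasing `t`, probably with easing applied, and write the result to the element's viewBox each time.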

This is not the first time that I've built something on this functionality: we've also used it for our Paper Hawks and for visualizing connections between Seattle's impressive women in the arts. But this is the most ambitious use so far, and a great chance to practice working closely with an illustrator as the print graphic was revised and updated. In the future, if we can polish this workflow, I think there's a lot of potential for us to do much more interesting cross-media illustrations.

October 17, 2016

Kill 'Em and Leave 'Em, by James McBride

"Can we hit it and quit?"

When it came to rhetorical questions, nobody beat James Brown. And the more you learn about him, the more layered his shouts during "Sex Machine" become: behind the "unscripted" banter, he was a harsh and unforgiving despot to his band, driving them relentlessly through a tightly-rehearsed show. No wonder their answer is always "yeah!" Yeah, you can hit it and quit. Whatever you say, James.

It is hard, as a white person born in the 80's, to fully appreciate the impact that James Brown had on America. Michael Jackson is easier for me to grasp: I grew up in a poor, racially-diverse neighborhood in Lexington, Kentucky, and I can still remember going over to a friend's house with my brother and seeing portraits of Jackson on the wall, in much the same way that the father in The Commitments keeps a picture of Elvis hung in the living room (right above the pope). James Brown was before my time and out of my sphere, so my appreciation, while sincere, is always more cerebral than heartfelt.

But if you want to get a little closer to understanding, this decade has been a good one for books about the Godfather of Soul. R.J. Smith's endlessly quotable The One came out in 2012, and now James McBride has tackled his legacy with Kill 'Em and Leave 'Em. Despite McBride's background as a musician, this isn't a deep dive into soul music. It's also not really a biography, in part because as McBride discovered, James Brown didn't let anyone into his confidence. He kept everyone at a distance, both fans and friends. The title is a quote, from Brown to the Rev. Sharpton: "kill 'em and leave 'em," he'd say, before slipping out from his shows, unseen by the fans waiting outside.

McBride interviews everyone he can find who knew Brown, ranging from his distant cousin, to his tax lawyer, to Alfred "Pee Wee" Ellis, the bandleader during Brown's greatest hits. Many of them are reluctant to talk about him, either because the memories are painful, or because he kept them at arms length, or both. Instead, what emerges is a kind of portrait of how James Brown left his mark on American culture, by way of his friends, family, and business partners.

It's a tribute to McBride's skill that he's able to weave these disjointed, scattered viewpoints into a compelling narrative. But part of what makes the tale so gripping is that unlike a traditional biography, McBride doesn't stop with his subject's death. In his will, James Brown left millions to fund education scholarships in South Carolina and Georgia, but not a dime was spent: lawsuits from disgruntled family members, and interference from the South Carolina government, immediately tied up the fortune and eventually all but depleted it.

Before he died, Brown told his friends that they wouldn't want to be anywhere near his estate when the end came. Indeed, the fallout was colossal. His attorney and accountant, both men who had helped haul him out from under IRS investigation, were ruined in the process. For all his faults, Brown deeply cared about giving poor kids like him a leg up, and to watch the estate disintegrate this way is painful. It's a tough pill to swallow at the end of a biography.

But let's be clear: any biography that claims to frame its subject neatly for the reader is kind of a fraud anyway. Who was the real James Brown? I don't think McBride really knows — I think he'd say that nobody really knew, not even the man himself. More importantly, he hints that it may be the wrong question to ask. Kill 'Em and Leave 'Em chronicles the impact that James Brown had on those around him, how that rippled out through communities (black and otherwise), and how it continues to inspire Americans today. James Brown is gone, McBride argues, but he's still telling our story.

October 3, 2016

Designing news apps for humanity

It's been a busy few weeks, but I do at least have an article up on Source with an overview of my SRCCON session on creating more humane digital journalism.

September 9, 2016

Classless components

In early August, I delivered my talk on "custom elements in production" to the CascadiaFest crowd. We've been using these new web platform features at the Seattle Times for more than two years now, and I wanted to share the lessons we've learned, and encourage others to give them a shot. Apart from some awkward technical problems with the projector, I actually think the talk went pretty well:

One of the big changes in the web component world, which I touched on briefly, is the transition from the V0 API that originally shipped in Chrome to the V1 spec currently being finalized. For the most part, the changeover is not a difficult one: some callbacks have been renamed, and there's a new function used to register the element definition.

There is, however, one aspect of the new spec that is deeply problematic. In V0, to avoid complicated questions around parser timing and integration, elements were only defined using a prototype object, with the constructor handled internally and inheritance specified in the options hash. V1 relies instead on an ES6 class definition, like so:

    class CustomElement extends HTMLElement {
      constructor() {
        super();
      }
    }

    customElements.define("custom-element", CustomElement);
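For comparison, here is a rough sketch of the V0 prototype style described above, using the `document.registerElement` call that the V0 API shipped with in Chrome. The element's behavior is made up for illustration, and the registration is guarded because V0 is not available in most environments. The empty fallback for `HTMLElement` is also an assumption, included only so the sketch can run outside a browser.

```javascript
// Use the real HTMLElement when there is one; otherwise an empty stand-in.
var ElementBase = typeof HTMLElement !== "undefined" ? HTMLElement : function () {};

// V0 definitions are plain prototype objects, with no constructor to write.
var proto = Object.create(ElementBase.prototype);

// V0 lifecycle hook, called when an instance is created.
// (Its closest V1 counterpart is the class constructor.)
proto.createdCallback = function () {
  this.textContent = "Hello from V0";
};

// Register only where the V0 API actually exists.
if (typeof document !== "undefined" && typeof document.registerElement === "function") {
  document.registerElement("custom-element", { prototype: proto });
}
```

The constructor never appears here, because in V0 the browser builds it internally from the prototype passed in the options object.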

When I wrote my presentation, I didn't think that this would be a huge problem. The conventional wisdom on classes in JavaScript is that they're just syntactic sugar for the existing prototype system — it should be possible to write a standard constructor function that's effectively identical, albeit more verbose.

The conventional wisdom, sadly, is wrong, as became clear once I started testing the V1 API currently available behind a flag in Chrome Canary. In fact, ES6 classes are not just a wrapper for prototypes: specifically, the super() call is not a straightforward translation to older inheritance models, especially when used to extend browser built-ins as it does here. No matter what workarounds I tried, Chrome's V1 custom elements implementation threw errors when passed an ES5 constructor with an otherwise valid prototype chain.
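The gap can be illustrated in plain JavaScript. This is a sketch that uses an ordinary class named `Base` as a stand-in for `HTMLElement` (an assumption, so it runs outside a browser), and `Es5Child` and `ReflectChild` are hypothetical names. The traditional ES5 pattern of calling the parent constructor with `.call(this)` throws when the parent is a class, while `Reflect.construct` can stand in for `super()`.

```javascript
// Stand-in for a built-in that must be constructed with `new`
class Base {
  constructor() {
    this.ready = true;
  }
}

// Classic ES5 inheritance: call the parent constructor on `this`
function Es5Child() {
  Base.call(this); // throws: class constructors cannot be called without `new`
}
Es5Child.prototype = Object.create(Base.prototype);
Es5Child.prototype.constructor = Es5Child;

var es5Error = null;
try {
  new Es5Child();
} catch (err) {
  es5Error = err;
}

// Function-style constructor that delegates construction to the parent.
// The third argument sets the prototype of the resulting object.
function ReflectChild() {
  return Reflect.construct(Base, [], ReflectChild);
}
ReflectChild.prototype = Object.create(Base.prototype);
ReflectChild.prototype.constructor = ReflectChild;

var child = new ReflectChild();
```

`Reflect.construct` is itself an ES6 addition, so this does nothing for a browser like Internet Explorer 10. Nothing here confirms that it satisfies any particular custom elements implementation, either.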

In a perfect world, we would just use the new syntax. But at the Seattle Times, we target Internet Explorer 10 and up, which doesn't support the class keyword. That means that we need to be able to write (or transpile to) an ES5 constructor that will work in both environments. Since the specification is written only in terms of classes, I did what you're supposed to do and filed a bug against the spec, asking how to write a backwards-compatible element definition.

It shouldn't surprise me, but the responses from the spec authors were wildly unhelpful. Apple's representative flounced off, insisting that it's not his job to teach people how to use new features. Google's rep closed the bug as irrelevant, stating that supporting older browsers isn't their problem.

Both of these statements are wrong, although only the second is wrong in an interesting way. Obviously, if you work on standards specifications, it is part of your job to educate developers. A spec isn't just for browsers to implement — if it were, it'd be written in a machine-readable language like WebIDL, or as a series of automated tests, not in stilted (but still recognizable) English. Indeed, the same Google representative that closed my issue previously defended the "tutorial-like" introductory sections elsewhere. Personally, I don't think a little consistency is too much to ask.

But it is the dismissal of older browsers, and the spec's responsibility to them, that I find more jarring. Obviously, a spec for a new feature needs to be free to break from the past. But a big part of the Extensible Web Manifesto, which directly references web components and custom elements, is that the platform should be explainable, and driven by feedback from real web developers. Specifically, it states:

Making new features easy to understand and polyfill introduces a virtuous cycle:

- Developers can ramp up more quickly on new APIs, providing quicker feedback to the platform while the APIs are still the most malleable.

- Mistakes in APIs can be corrected quickly by the developers who use them, and library authors who serve them, providing high-fidelity, critical feedback to browser vendors and platform designers.

- Library authors can experiment with new APIs and create more cow-paths for the platform to pave.

In the case of the V1 custom elements spec, feedback from developers is being ignored — I'm not the only person that has complained publicly about the way that the class-based definitions are a pain to use in a mixed-browser environment. But more importantly, the spec is actively hostile to polyfills in a way that the original version was not. Authors currently working to shim the V1 API into browsers have faced three problems:

- Calling super() invokes magic that's hard to reproduce in ES5, and needlessly so.

- HTMLElement isn't a callable function in older environments, and has to be awkwardly monkey-patched.

- Apple publicly opposes extending anything other than the generic HTMLElement, and has only allowed it into the spec so they can kill it later.

The end result is that you can write code that will work in old and new browsers, but it won't exactly look like real V1 code. It's not a true polyfill, more of a mini-framework that looks almost — but not exactly! — like the native API.

I find this frustrating in part for its inelegance, but more so because it fundamentally puts the lie to the principles of the extensible web. You can't claim that you're explaining the capabilities of the platform when your API is polyfill-hostile, since a polyfill is the mechanism by which we seek to explain and extend those capabilities.

More importantly, there is no surer way to slow adoption of a web feature than to artificially restrict its usage, and to refuse to educate developers on how to use it. The spec didn't have to be this way: they could detail ES5 semantics, and help people who are struggling, but they've chosen not to care. As someone who literally stood on a stage in front of hundreds of people and advocated for this feature, that's insulting.

Contrast the bullying attitude of the custom elements spec authors with the advocacy that's been done on behalf of Service Worker. You couldn't swing a dead cat in 2016 without hitting a developer advocate talking up its benefits, creating detailed demos, offering advice to people trying it out, and talking about how it gracefully degrades in older browsers. As a result, chances are good that Service Worker will ship in multiple browsers, and see widespread adoption, by the end of next year.

Meanwhile, custom elements will probably languish in relative obscurity, as they've done for many years now. It's a shame, because I'd argue that the benefits of custom elements are strong enough to justify using them even via the old V0 polyfill. I still think they're a wonderful way to build and declare UI, and we'll keep using them at the Times. But whatever wider success they achieve will be despite the spec, not because of it. It's a disgrace to the idea of an extensible web. And the authors have only themselves to blame.

August 10, 2016

RIP Chrome apps

Update: Well, that was prescient.

At least once a day, I log into the Chrome Web Store dashboard to check on support requests and see how many users I've still got. Caret has held steady for the last year or so at about 150,000 active users, give or take ten thousand, and the support and feature requests have settled into a predictable rut:

- People who can't run Caret because their version of Chrome is too old, and I've started using new ES6 features that aren't supported six browser versions back.

- People who want split-screen support, and are out of luck barring a major rewrite.

- People who don't like the built-in search/replace functionality, which makes sense, because it's honestly pretty terrible.

- People who don't like the icons, and are just going to have to get over it.

In a few cases, however, users have more interesting questions about the fundamental capabilities of developer tooling, like file system monitoring or plugging into the OS in a deeper way. And there I have bad news, because as far as I can tell, Chrome apps are no longer actively developed by the Chromium team at all, and probably never will be again.

I don't think Chrome apps are going away immediately — they're still useful and used by a lot of third-party companies — but it's pretty clear from the dev side of things that Google's heart isn't in it anymore. New APIs have ceased to roll out, and apps don't get much play at conferences. The new party line is all about progressive web apps, with browser extensions for the few cases where you need more capabilities.

Now, progressive web apps are great, and anything that moves offline applications away from a single browser and out to the wider web is a good thing. But the fact remains that while a large number of Chrome apps can become PWAs with little fuss, Caret can't. Because it interacts with the filesystem so heavily, in a way that assumes a broader ecosystem of file-based tools (like Git or Node), there's actually no path forward for it using browser-only APIs. As such, it's an interesting litmus test for just how far web apps can actually reach — not, as some people have wrongly assumed, because there's an inherent performance penalty on the web, but because of fundamental limits in the security model of the browser.

Bounding boxes

What's considered "possible" for a web app in, say, 2020? It may be easier to talk about what isn't possible, which avoids the judgment call on what is "suitable." For example, it's a safe bet that the following capabilities won't ever be added to the web, even though they've been hotly debated in and out of standards committees for years:

- Read/write file access (died when the W3C pulled the plug on the Directories part of the Filesystem API)

- Non-HTTP sockets and networking (an endless number of reasons, but mostly "routers are awful")

There are also a bunch of APIs that are in experimental stages, but which I seriously doubt will see stable deployment in multiple browsers, such as:

- Web Bluetooth (enormous security and usability issues)

- Web USB (same as Bluetooth, but with added attacks from the physical connection)

- Battery status (privacy concerns)

- Web MIDI

It's tough to get worked up about a lot of the initiatives in the second list, which mostly read as a bad case of mobile envy. There are good reasons not to let a web page have drive-by access to hardware, and who's hooking up a MIDI keyboard to a browser anyway? The physical web is a better answer to most of these problems.

When you look at both lists together, one thing is clear: Chrome apps have been a testing ground for web features. Almost all the not-to-be-implemented web APIs have counterparts in Chrome apps. And in the end, the web did learn from them, mainly that even in a sandboxed, locked-down, centrally distributed environment, giving developers that much power with so little install friction could be really dangerous. Rogue extensions and apps are a serious problem for Chrome, as I can attest: about once a week, shady people e-mail me to ask if they can purchase Caret. They don't explicitly say that they're going to use it to distribute malware and takeover ads, but the subtext is pretty clear.

The great thing about the web is that it can run code without any installation step, but that's also the worst thing about it. Even as a huge fan of the platform, I find the idea that any of the uncountable pages I visit in any given week could access USB directly pretty chilling, especially when combined with exploits for devices that are plugged in, like hacking a phone (a nice twist on the drive-by jailbreak of iOS 4). Access to the file system opens up an even bigger can of worms.

Basically, all the things that we want as developers are probably too dangerous to hand out to the web. I wish that weren't true, but it is.

Untrusted computing

Let's assume that all of the above is true, and the web can't safely expand for developer tools. You can still build powerful apps in a browser, they just have to be supported by a server. For example, you can use a service like Cloud 9 (now an AWS subsidiary) to work on a hosted VM. This is the revival of the thick-client model: offline capabilities in a pinch, but ultimately you're still going to need an internet connection to get work done.

In this vision, we are leaning more on the browser sandbox: creating a two-tier system with the web as a client runtime, and a native tier for more trust on the local machine. But is that true? Can the web be made safe? Is it safe now? The answer is, at best, "it depends." Every third-party embed or script exposes your users to risk — if you use an ad network, you don't have any real idea who could be reading their auth cookies or tracking their movements. The miracle of the web isn't that it is safe, it's that it manages to be useful despite how rampantly unsafe its defaults are.

So along with the shift back to thick clients has come a change in the browser vendors' attitude toward powerful API features. For example, you can no longer use geolocation or the camera/microphone in Chrome on pages that aren't served over HTTPS, with other browsers to follow. Safari already disallows third-party cookie access as a general rule. New APIs, like Service Worker, require HTTPS. And I don't think it's hard to imagine a world where an API also requires a strict Content Security Policy that bans third-party embeds altogether (another place where Chrome apps led the way).

The packaged app security model was that if you put these safeguards into place and verified the package contents, you could trust the code to access additional capabilities. But trusting the client was a mistake when people were writing Quakebots, and it stayed a mistake in the browser. In the new model, those controls are the minimum just to keep what you had. Anything extra that lives solely on the client is going to face a serious uphill battle.

Mind the gap

The longer that I work on Caret, the less I'm upset by the idea that its days are numbered. Working on a moderately-successful open source project is exhausting: people have no problems making demands, sending in random changes, or asking the same questions over and over again. It's like having a second boss, but one that doesn't pay me or offer me any opportunities for advancement. It's good for exposure, but people die from exposure.

The one regret that I will have is the loss of Caret's educational value. Since its early days, there's been a small but steady stream of e-mail from teachers who are using it in classrooms, both because Chromebooks are huge in education and because Caret provides a pretty good editor with almost no fuss (you don't even have to be signed in). If you're a student, or poor, or a poor student, it's a pretty good starter option, with no real competition for its market niche.

There are alternatives, but they tend to be online-only (like Mozilla's Thimble) or they're not Chromebook friendly (Atom) or they're completely unacceptable in a just world (Vim). And for that reason alone, I hope Chrome keeps packaged apps around, even if they refuse to spend any time improving the infrastructure. Google's not great at end-of-life maintenance, but there are a lot of people counting on this weird little ecosystem they've enabled. It would be a shame to let that die.

August 1, 2016

<slide-show>

On Thursday, I'll be giving a talk at CascadiaFest on using custom elements in production. It's kind of a sales pitch, to convince people that adopting web components is safe to do, despite the instability of the spec and the contentious politics between browsers. After all, we've been publishing with several components at the Times for almost two years now, with good results.

When I presented an early version of this at SeattleJS, I scrolled through a single text file instead of slides, because I've always wanted to do that. But for Cascadia, I wanted to do something a little more special, so I built the presentation itself out of custom elements, with the goal that it would demonstrate how to write code that works with both versions of the spec. It's also meant to be a good example for someone who's just learning how web components function: I use pretty much every custom elements feature at one point or another in 300 lines of code. You can take a look at the source for it here.

There are several strategies that I ended up emphasizing while writing the <slide-show> elements, primarily the heavy use of events to tame asynchronicity. It turns out that between V0, V1, and the two major polyfills, elements and their attributes are resolved by the parser with entirely different timing. It's really important that child elements notify their parent when they upgrade, and parents shouldn't assume that children are ready at startup.

One way to deal with asynchronous upgrades is just to put all your functionality in the parent element (our <leaflet-map> does this), but I wanted to make these slides easier to extend with new types (such as text, code, or image slides). In this case, the slide show looks for a parsedContent property on the current slide, and it's the child's job to populate and update that value. An earlier version called a parseContents() method, but using properties as "duck-typing" makes it much easier to handle un-upgraded elements, and moving the responsibility to the child also greatly simplified the process of watching slide contents for changes.
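As a rough illustration of the property-based approach, the parent can read parsedContent defensively instead of calling a method. This is a sketch rather than the actual <slide-show> source: plain objects stand in for child elements so it runs outside a browser, and the getSlideContent name and its fallback argument are assumptions made for the example.

```javascript
// Read a slide's content via duck-typing: any object that exposes a
// parsedContent property counts as ready, whatever its class is.
function getSlideContent(slide, fallback = "") {
  if (slide && slide.parsedContent !== undefined) {
    return slide.parsedContent;
  }
  // An un-upgraded element has no such property yet. Reading it is
  // harmless, whereas calling slide.parseContents() would throw.
  return fallback;
}

// Plain objects standing in for child elements (hypothetical data).
const upgradedSlide = { parsedContent: "<h1>Hello</h1>" };
const pendingSlide = {}; // its definition hasn't been registered yet

const shown = getSlideContent(upgradedSlide);
const placeholder = getSlideContent(pendingSlide, "Loading");
```

In a real page, the parent would run this again whenever a child announces that it has upgraded or that its contents have changed.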

A nice side effect of using live properties and events is that it "feels" a lot more like a built-in element. The modern DOM API is built on similar primitives, so writing the glue code for the UI ended up being very pleasant, and it's possible to interact using the dev tools in a natural way. I suspect that well-built component libraries in the future will be judged on how well they leverage a declarative interface to blend in with existing elements.

Ironically, between child elements and Shadow DOM, it's actually much harder to move between different polyfills than it is to write an element definition for both the new and old specifications. We've always written for Giammarchi's registerElement shim at the Times, and it was shocking for me to find out that Polymer's shim not only diverges from its counterpart, but also differs from Chrome's native implementation. Coding around these differences took a bit of effort, but it's probably work I should have done at the start, and the result is quite a bit nicer than some of the hacks I've done for the Times. I almost feel like I need to go back now and update them with what I've learned.

Writing this presentation was a good way to make sure I was current on the new spec, and I'm actually pretty happy with the way things have turned out. When WebKit started prototyping their own API, I started to get a bit nervous, but the resulting changes are relatively minor: some property names have changed, the lifecycle is ordered a bit differently, and upgrade code is called in the constructor (to encourage using the class syntax) instead of from a createdCallback() method. Most of these are positive alterations, and while there are some losses going from V0 to V1 (no "is" attribute to subclass arbitrary elements), they're not dealbreakers. Overall, I'm more optimistic about the future of web components than I have been in quite a while, and I'm looking forward to telling people about it at Cascadia!
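For reference, the naming differences between the two specs are small enough to bridge mechanically. The sketch below maps a set of V1-style methods onto their V0 names. toV0Methods is a hypothetical helper, not part of either spec or of any polyfill, and it works on plain objects so that it runs without a DOM.

```javascript
// Lifecycle methods as named in V1, mapped to their V0 names.
const V1_TO_V0 = {
  connectedCallback: "attachedCallback",
  disconnectedCallback: "detachedCallback",
  attributeChangedCallback: "attributeChangedCallback" // name unchanged
};

// Hypothetical helper: copy V1-style methods onto a V0-style object.
// `init` holds the setup code that V1 runs in the constructor and
// V0 runs in createdCallback().
function toV0Methods(v1Methods, init) {
  const v0 = { createdCallback: init };
  for (const name of Object.keys(v1Methods)) {
    v0[V1_TO_V0[name] || name] = v1Methods[name];
  }
  return v0;
}

const log = [];
const v0 = toV0Methods(
  {
    connectedCallback() { log.push("attached"); },
    disconnectedCallback() { log.push("detached"); }
  },
  function () { log.push("created"); }
);

// Simulate the order in which a V0 browser would call the hooks.
v0.createdCallback();
v0.attachedCallback();
v0.detachedCallback();
```

One difference this doesn't cover: V1 only calls attributeChangedCallback for attributes listed in a static observedAttributes getter, so a shared definition needs to declare those as well.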